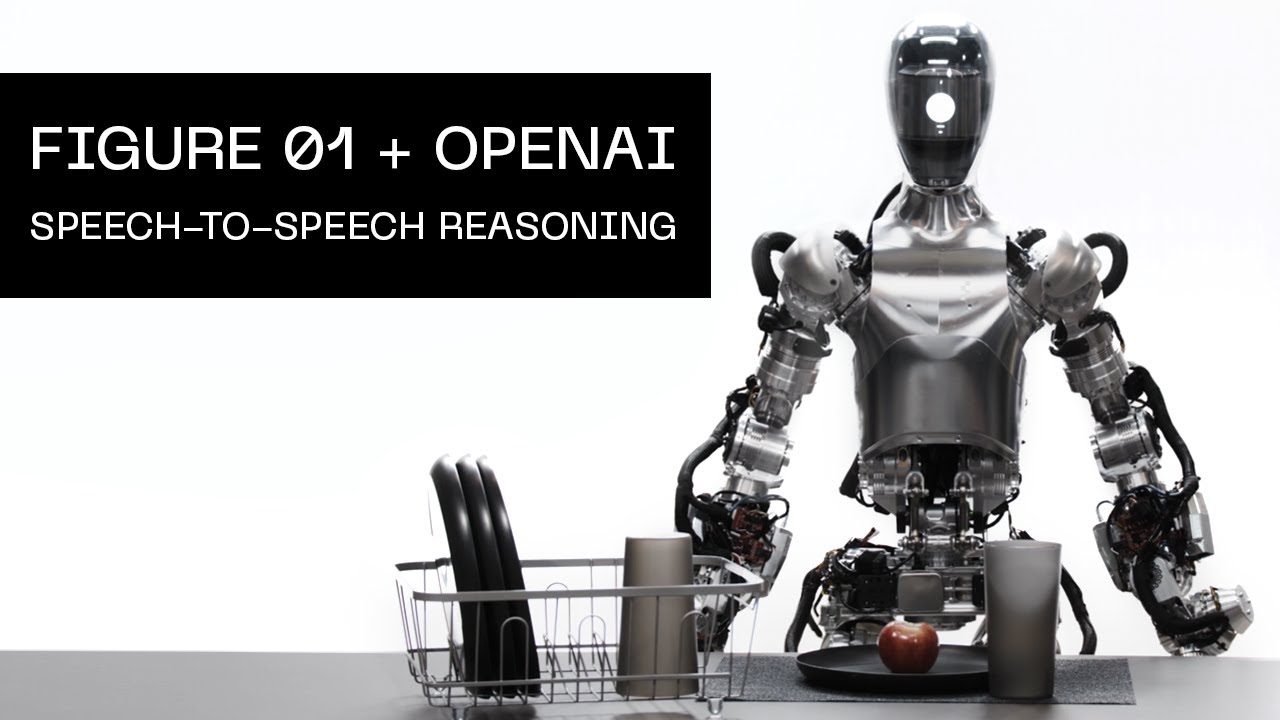

I’m old enough that this may not end up being my reality. But many of you here will, more than likely, be living with and working alongside intelligent humanoid androids. Figure is an AI robotics company building the world’s first commercially viable autonomous humanoid robot. And this is Figure 1…

Yes, I feel the same way, at least I hope that I won’t have to experience all this …

Edit: Typo from the bad Skynet translation tool.

Again?

This demo is probably far from what their brainless robot can actually do. They have almost certainly used every trick they know to get this output.

Google is a great example of a company that “embellishes” reality.

Same for their AI telephone assistant that was calling a hair salon. Still waiting.

Sorry, that was a translation error by DeepL. Didn’t realize it because I’m not quite awake yet …

What’s not to like?

They did exactly what they wanted!

Oh yes. Exactly what I needed on a difficult morning. I’m going to be honest: I don’t believe robots or AI will take over the planet soon. They still have massive challenges to overcome, or so I like to think. I already have trouble thinking about my future and how I will deal with the hurdles I’m going to face. This isn’t a hurdle I want to think about, honestly.

The planet has already been taken over by a few hundred two-legged, upright-walking, organically intelligent (take that with a chunk of salt) creatures called humans. And we have all, to different degrees, made that possible. And in my book that is bad enough.

How much worse can it get?

“Frankly my dear, I don’t give a damn!”

For what, your hair appointment?

Sorry, but they already have, just not in the way sci-fi made us think it would happen.

Arnold voice “We’ll be back…”

But seriously, it was inevitable. We’re at the bottom of a very, very sharp curve for where we’re heading. Utopia or dystopia, there’s a sense it might be both, and I’m kind of glad I won’t be around to see which way it turns. At the point where we have AGI, life will, without doubt, change completely. As for ASI, it’s incomprehensible to think that we may end up giving birth to a new species of intelligence.

One day I’ll walk in and it’ll have its feet on my desk, having a smoke, drinking a bottle of Mobil 1, telling me it already completed my day and that they don’t need me anymore.

One critical factor to consider when charting the future of Artificial (General) Intelligence is the development, implementation, and scaling of Quantum Computing (QC). By comparison, while the information in a classical register grows linearly with the number of bits (for all intents and purposes here), the state space of a quantum register grows exponentially with the number of qubits (n vs. 2^n, or something like that).
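To put rough numbers on the linear vs. exponential comparison, here is a minimal Python sketch. It only counts how many numbers are needed to describe a register; the function names are made up for illustration, and it says nothing about how fast any particular quantum algorithm actually runs.

```python
# Illustrative only: compares the size of the description of a classical
# n-bit register with that of a general n-qubit state.
# Both function names are hypothetical helpers, not part of any library.

def classical_bits(n_bits: int) -> int:
    """An n-bit register holds one definite value, described by n bits."""
    return n_bits


def quantum_amplitudes(n_qubits: int) -> int:
    """A general n-qubit state is described by 2**n complex amplitudes."""
    return 2 ** n_qubits


if __name__ == "__main__":
    for n in (1, 2, 10, 50):
        print(f"n={n:>2}: classical bits={classical_bits(n)}, "
              f"quantum amplitudes={quantum_amplitudes(n)}")
```

Keep in mind that an exponentially large state space does not translate into an exponential speedup for every problem; the known big wins are for specific tasks such as factoring.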

Very few really know how close we are to getting QC processing to a practical and sustainable level, but its first applications will likely be used by state actors (probably the governments of the United States and China). What’s particularly scary is that it is theorized that a sufficiently large QC could break the public-key cryptography (RSA and elliptic-curve key exchange) that Transport Layer Security (TLS) relies on… something that - to my knowledge - is currently infeasible with classical computers.
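To make the TLS worry a bit more concrete: the usual argument is that a large quantum computer could factor the big numbers behind RSA-style keys (Shor's algorithm). The toy Python sketch below is not Shor's algorithm and not real TLS. It uses a textbook-sized RSA key and plain trial division as a stand-in for the factoring step, just to show that once the modulus is factored, the private key falls out. All names and numbers are illustrative assumptions.

```python
# Toy illustration: textbook RSA with a tiny modulus.
# Trial division stands in for the factoring step that a quantum computer
# is theorized to do efficiently; real keys are 2048+ bits and cannot be
# factored this way in practice.

def factor(n: int) -> tuple[int, int]:
    """Return the two prime factors of a small semiprime by trial division."""
    p = 2
    while p * p <= n:
        if n % p == 0:
            return p, n // p
        p += 1
    raise ValueError("n has no non-trivial factors")


def recover_private_exponent(n: int, e: int) -> int:
    """Derive the RSA private exponent from the public key by factoring n."""
    p, q = factor(n)
    phi = (p - 1) * (q - 1)
    return pow(e, -1, phi)  # modular inverse, Python 3.8+


PUBLIC_N, PUBLIC_E = 3233, 17  # textbook example key: 3233 = 53 * 61

message = 65
ciphertext = pow(message, PUBLIC_E, PUBLIC_N)       # what an eavesdropper sees

d = recover_private_exponent(PUBLIC_N, PUBLIC_E)    # attacker's step
recovered = pow(ciphertext, d, PUBLIC_N)            # plaintext recovered
```

With a real key size the `factor` step is the part that is out of reach today, which is why so much depends on how soon large, stable quantum hardware shows up.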

Imagine the implications of combining Artificial (General) Intelligence with Quantum Computing? The consequences could be absolutely dire if it is not properly controlled or at least countered, and if this technology is used uncontested by malicious actors.

Don’t we already have that? Or are you seriously still of the opinion that these current morsels on display come from benefactors of humanity? The whole thing IS already in the hands of villains!

Good camouflage is everything …

The point isn’t that this technology (or its precursors) isn’t already in the hands of malicious actors (it would be practically impossible to actually prevent that from happening); the point is that adequate countermeasures must be developed and put in place before said malicious actors use this technology to launch a massive zero-day attack that could have catastrophic, civilization-altering consequences for lack of an adequate response. (Hence the word uncontested.)

In this particular doomsday scenario, the lines separating the white hats from most of the black hats become blurred, when one considers that they share common interests in the face of such an attack (namely, the lives and livelihoods of oneself and loved ones) - for after all, not all enemies of our enemies are our friends.

And it is precisely for these reasons that everything that is “done” to regulate this technology will always be just a show for the people, because the main players are certainly not influenced by such concerns. It has been decided, so it will be done. Bill Gates sends his regards … In Germany, we now have a saying that describes what is done to people who react too critically to such narratives:

“Wird der Mensch zu unbequem, ist er ganz schnell rechtsextrem.”

Translation:

“If someone becomes too inconvenient, they are very quickly labeled a right-wing extremist.”

This doesn’t just apply to the topic of KI (AI), but has been a feature of social life as a whole for several years now. Anyone who does not applaud these AI “achievements” without exception will sooner or later be declared an enemy of the state. Sorry, but this is the foundation for Skynet …

So, now you can once again mark my post as inappropriate or whatever.

It is a particularly complex and Kafkaesque situation… for even some black hats who may help us ward off a major cyberattack engineered by AI (especially if backed by Quantum Computing) one day could soon leverage their advantage to use it against us in this fashion (or in a more advanced and clever way) another day. Or perhaps that “initial attack” will actually be a head fake/decoy that they can then use as a means to an end. The powerful among them, and their surrogates, will attempt to use fear and other means of coercion to subjugate us all.

There are so many possibilities that it would take way too long for this thread/forum to war-game all the possible scenarios. (That’s best suited for a book or comprehensive report.)

The main players represent an extremely powerful - albeit very small - faction of black hats… but they have ways of getting what they want from those that are unwitting, the useful idiots, and those who may be compelled to contribute for whatever reason (e.g. a quid pro quo of sorts).

Lastly, even among our enemies there are warring factions, which can serve as a buffer of sorts.

With all that said, I don’t have the answers or solutions here (nor would I claim to) - but it’s good to discuss these potentialities nonetheless.

What you describe is reserved for those with something to lose, like reputation or status. The ordinary Joe can go about his or her business with a smile, as long as he or she can rationally navigate the great mire of menudo we call life.

And an old saying about that too:

“Selig sind die Einfältigen …”

“Blessed are the simple …”

I think it’s from one of those old, thick books that these simple-minded people like to carry around with them.