I wrote a simple script to fix it (grub-install from the Arch wiki), but it only works in arch-chroot from a live USB installer, so I can’t just run it after each update.

To fix the grub boot loader, you’ll have to boot the live ISO, arch-chroot into the installed system, reinstall grub, and then run the proper Arch grub update command.
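
As a rough sketch of that procedure, the sequence from the live ISO could look like the following. The device names and the `endeavouros` bootloader id are assumptions for illustration only; confirm the real partitions with `lsblk -f` first. The script only prints the commands unless `DRY_RUN=0` is set.

```shell
#!/bin/sh
# Sketch of a GRUB repair from the live ISO. Nothing is executed unless DRY_RUN=0.
# ROOT_DEV and ESP_DEV are placeholders (assumptions): replace them with your own
# root and EFI system partitions as shown by `lsblk -f`.
ROOT_DEV="${ROOT_DEV:-/dev/sdX2}"
ESP_DEV="${ESP_DEV:-/dev/sdX1}"
DRY_RUN="${DRY_RUN:-1}"

run() {
    # Print the command; execute it only when dry-run mode is switched off.
    echo "+ $*"
    if [ "$DRY_RUN" = 0 ]; then
        "$@"
    fi
}

repair_grub() {
    # Mount the installed system, then reinstall GRUB and regenerate its config
    # from inside the chroot.
    run mount "$ROOT_DEV" /mnt &&
    run mount "$ESP_DEV" /mnt/boot/efi &&
    run arch-chroot /mnt grub-install --target=x86_64-efi \
        --efi-directory=/boot/efi --bootloader-id=endeavouros &&
    run arch-chroot /mnt grub-mkconfig -o /boot/grub/grub.cfg
}

repair_grub
```

Reviewing the printed commands before running with `DRY_RUN=0` is a cheap way to catch a wrong device name.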

Post the script, so we have an idea of the problem.

Or post other info:

grep -vE "^\s*#" /etc/default/grub | grep .

grep -vE "^\s*#" /etc/fstab | grep . | sed 's/\s\+/ /g'

lsblk -flp

Can you elaborate? I’m already running grub-install in arch-chroot and it appears to fix grub until the next update. I had to do this about 7 times already this month. As you might imagine, it’s really annoying.

$ cat /grub-reinstall.sh

#!/bin/sh

mount /dev/nvme1n1p1 /boot/efi

grub-install --target=x86_64-efi --efi-directory=/boot/efi --bootloader-id=endeavouros

I’ve tried running it immediately after pacman -Suy, just before the reboot, and it did nothing. It worked in arch-chroot from a live USB though.

$ grep -vE "^\s*#" /etc/default/grub | grep .

GRUB_DEFAULT='0'

GRUB_TIMEOUT='5'

GRUB_DISTRIBUTOR='EndeavourOS'

GRUB_CMDLINE_LINUX_DEFAULT='nowatchdog nvme_load=YES loglevel=3'

GRUB_CMDLINE_LINUX=""

GRUB_PRELOAD_MODULES="part_gpt part_msdos"

GRUB_TIMEOUT_STYLE=menu

GRUB_TERMINAL_INPUT=console

GRUB_GFXMODE=auto

GRUB_GFXPAYLOAD_LINUX=keep

GRUB_DISABLE_RECOVERY='true'

GRUB_BACKGROUND='/usr/share/endeavouros/splash.png'

GRUB_DISABLE_SUBMENU='false'

$ grep -vE "^\s*#" /etc/fstab | grep . | sed 's/\s\+/ /g'

UUID=E68A-7F54 /boot/efi vfat ro,fmask=0137,dmask=0027 0 2

UUID=1303b1d7-0634-4b8b-ae96-c27aed668556 / f2fs noatime 0 1

UUID=28425aa0-6346-4e83-8d92-5bd2fc988d2c /home f2fs noatime 0 0

tmpfs /tmp tmpfs defaults,noatime,mode=1777 0 0

$ lsblk -flp

NAME FSTYPE FSVER LABEL UUID FSAVAIL FSUSE% MOUNTPOINTS

/dev/nvme1n1

/dev/nvme1n1p1 vfat FAT32 E68A-7F54 997,6M 0% /boot/efi

/dev/nvme1n1p2 f2fs 1.16 1303b1d7-0634-4b8b-ae96-c27aed668556 63,7G 36% /

/dev/nvme1n1p3 f2fs 1.15 28425aa0-6346-4e83-8d92-5bd2fc988d2c 611,5G 26% /home

/dev/nvme0n1

/dev/nvme0n1p1 vfat FAT32 SYSTEM A44C-1C94

/dev/nvme0n1p2

/dev/nvme0n1p3 ntfs OS 78244DEB244DAD46

/dev/nvme0n1p4 ntfs RECOVERY 5280A7A380A78C53

/dev/nvme0n1p5 ntfs RESTORE EC8AC7668AC72C40

/dev/nvme0n1p6 vfat FAT32 MYASUS 6EC7-6F50

I added ro to /boot/efi just to see if it would stop updates from messing with grub. And yes, it’s a dual-boot with Windows installed on a second SSD.
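
One thing worth ruling out with that `ro` option in place: `grub-install` has to write the image to the ESP, so it cannot update anything while `/boot/efi` is mounted read-only. A minimal sketch of a pre-check, assuming `findmnt` from util-linux is available; the helper name `has_ro_option` is made up for illustration.

```shell
#!/bin/sh
# Hypothetical helper: succeeds if a comma-separated mount option list contains "ro".
has_ro_option() {
    case ",$1," in
        *,ro,*) return 0 ;;
        *)      return 1 ;;
    esac
}

# Query the live mount options of the ESP (empty if findmnt is missing or
# nothing is mounted at /boot/efi).
opts=$(findmnt -no OPTIONS /boot/efi 2>/dev/null || true)

if [ -z "$opts" ]; then
    echo "/boot/efi is not mounted (or findmnt is unavailable)"
elif has_ro_option "$opts"; then
    echo "/boot/efi is read-only; remount with: mount -o remount,rw /boot/efi"
else
    echo "/boot/efi is writable"
fi
```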

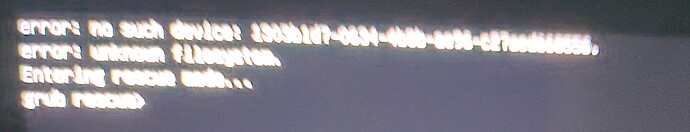

The error complains that it cannot find the root partition, IIUC.

In this case, stay in grub rescue prompt and gather info.

Try to find your root partition, and, if possible, boot manually. Some reading about grub rescue commands will help.

It is possible that the BIOS is too quick, or that it has some setting that assists with device discovery or adds a wait.

It is possible the BIOS is just bad.

How long did you have this installation without problems, and when exactly did the problem start?

I would try to boot from the BIOS quick boot menu. This usually gives the BIOS some time to discover hardware properly.

To clarify:

- The UEFI boot entry that starts the system is executing/booting a small grub image (grub.efi) with minimal capabilities. That image cannot discover the root partition.

- Your re-installation script replaces that image with an identical image, unless grub has been updated during the last boot session. (My last grub update was in March.)

- It sounds like a hardware/firmware(BIOS) issue to me.

- Or some limitation of grub and the f2fs partition encoding. How were the f2fs partitions originally created? If in Windows, that may have something to do with it. (Read the grub manual about it; search for convmv and f2fs.)
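
The point that reinstalling writes an identical image can be checked directly. A small sketch, assuming the image lives at `/boot/efi/EFI/endeavouros/grubx64.efi` (inferred from the `--bootloader-id=endeavouros` option in the posted script, so adjust the path if yours differs): record a checksum, run `grub-install`, then run the check again. The function and variable names are illustrative.

```shell
#!/bin/sh
# Assumed image location; adjust to match your --efi-directory and --bootloader-id.
IMG="${IMG:-/boot/efi/EFI/endeavouros/grubx64.efi}"
SUM_FILE="${SUM_FILE:-/tmp/grubx64.efi.sha256}"

# Print only the SHA-256 digest of a file.
digest() {
    sha256sum "$1" | cut -d' ' -f1
}

# Compare the current digest with the stored one; store it on the first run
# and whenever the image has changed.
check_image() {
    img=$1
    store=$2
    new=$(digest "$img")
    if [ ! -f "$store" ]; then
        echo "$new" > "$store"
        echo "saved digest $new"
    elif [ "$new" = "$(cat "$store")" ]; then
        echo "image unchanged"
    else
        echo "$new" > "$store"
        echo "image changed"
    fi
}

if [ -r "$IMG" ]; then
    check_image "$IMG" "$SUM_FILE"
else
    echo "cannot read $IMG (is the ESP mounted?)"
fi
```

If the digest is the same before and after a reinstall that "fixed" booting, the image itself is unlikely to be what changed.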

I started getting this issue about a month ago. It’s hard to say exactly, but I reinstalled it shortly before (maybe a week or two ago) because it turned off randomly.

I think I tried this and it was having the same error.

I don’t remember updating it. Though I had to install a custom kernel recently. Basically 6.9 with a few ath12k patches.

They were created in the ISO installer.

Did you use f2fs in your previous installations?

You may have a failing disk, or a failing file system.

Check your disk.
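
As a starting point, something like the following could cover both the disk and the file system. This is only a sketch: the device names are taken from the lsblk output posted above, `smartctl` comes from the smartmontools package and `fsck.f2fs` from f2fs-tools, and the file system check should be run from the live ISO with the partition unmounted. The script just prints what to run.

```shell
#!/bin/sh
# Device names as they appear in the posted lsblk output; adjust for your system.
DISK="${DISK:-/dev/nvme1n1}"
ROOT_PART="${ROOT_PART:-/dev/nvme1n1p2}"

# Print the suggested command, noting whether the tool is installed.
suggest() {
    tool=$1
    if command -v "$tool" >/dev/null 2>&1; then
        echo "run as root: $*"
    else
        echo "install $tool first, then run as root: $*"
    fi
}

# SMART health and error log of the NVMe drive.
suggest smartctl -a "$DISK"
# File system check of the (unmounted) f2fs root partition.
suggest fsck.f2fs "$ROOT_PART"
```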

I don’t have the experience that @petsam has, but I wouldn’t use a script. I did this on my system just to try it, as I have had a couple of experiences lately when updating kernels where it booted into firmware only, with no other entries listed in grub. I didn’t use a path on grub-install because I only have one install on one drive.

/etc/pacman.d/hooks/my-grub.hook

[Trigger]

Operation = Upgrade

Type = Package

Target = linux

Target = grub

[Action]

Description = Refresh grub after upgrading certain packages.

When = PostTransaction

Depends = grub

Exec = /bin/bash -c "/bin/grub-install && /bin/grub-mkconfig -o /boot/grub/grub.cfg"

The code in ricklinux’s post should work after you check and possibly adjust the parameters for the grub-install command.
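
For this particular system, the adjusted parameters would presumably be the ones from the reinstall script posted earlier. A sketch of a small helper the hook could call instead of the inline bash command; the file name `/usr/local/bin/refresh-grub` is hypothetical, and the script only prints the commands unless `DRY_RUN=0` is set.

```shell
#!/bin/sh
# Hypothetical helper for the hook's Exec line, e.g. saved as
# /usr/local/bin/refresh-grub (name and path are assumptions).
# Commands are only printed unless DRY_RUN=0.
ESP_DIR="${ESP_DIR:-/boot/efi}"
BOOT_ID="${BOOT_ID:-endeavouros}"
DRY_RUN="${DRY_RUN:-1}"

run() {
    # Always show the command; execute it only when dry-run is disabled.
    echo "+ $*"
    [ "$DRY_RUN" != 0 ] || "$@"
}

refresh_grub() {
    # Same grub-install parameters as the posted reinstall script,
    # followed by the config regeneration from the hook.
    run grub-install --target=x86_64-efi --efi-directory="$ESP_DIR" \
        --bootloader-id="$BOOT_ID" &&
    run grub-mkconfig -o /boot/grub/grub.cfg
}

refresh_grub
```

Once the printed commands look right, the hook's Exec line could call it as `/usr/bin/env DRY_RUN=0 /usr/local/bin/refresh-grub`. Defaulting to a dry run keeps an untested hook from touching the boot loader.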

After RTFM for f2fs, it seems there are some warnings for a grub limitation case.

If the root is not expressed in UUID in grub.cfg, you should replace the value with the relevant partition UUID. (Although the message already suggests that grub is looking for a UUID, so it is probably not the case here.)
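
A quick way to see how the root is expressed is to list the relevant lines of grub.cfg and check whether the UUID of the mounted root appears in them. This is a sketch: which lines count as "relevant" (kernel `root=` arguments and `search --fs-uuid` lines) is an assumption, and the function names are illustrative.

```shell
#!/bin/sh
CFG="${CFG:-/boot/grub/grub.cfg}"

# Show every line of a grub.cfg that sets or searches for the root device.
root_lines() {
    grep -nE 'root=|--fs-uuid' "$1"
}

# Succeed if the given file system UUID appears anywhere in the file.
mentions_uuid() {
    grep -qF -- "$2" "$1"
}

if [ -r "$CFG" ]; then
    root_lines "$CFG" || echo "no root lines found in $CFG"
    # UUID of the file system currently mounted at / (empty if findmnt fails).
    uuid=$(findmnt -no UUID / 2>/dev/null || true)
    if [ -n "$uuid" ] && mentions_uuid "$CFG" "$uuid"; then
        echo "root UUID $uuid is referenced in $CFG"
    else
        echo "root UUID ${uuid:-unknown} is NOT referenced in $CFG"
    fi
else
    echo "cannot read $CFG (try as root)"
fi
```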

About the proposed hook: automating such a system action is too high-risk for my insecure conscience.

IMHO, after the source of the problem is found, then a decision about how to deal with it can be made. Each system+user+hardware combination may require a different approach.

RTFM is mandatory in such cases.

Why? You don’t like jumping off the grub cliff?

Edit: I agree with you on this.

Well, unfortunately I don’t have a way to check this anymore. I definitely used it for /home though.

I hope it is not, this 990 Pro isn’t even that old.

$ sudo badblocks -sv /dev/nvme1n1

Checking blocks 0 to 976762583

Checking for bad blocks (read-only test): done

Pass completed, 0 bad blocks found. (0/0/0 errors)

A bit frustrating since the reason(s) why this happened never came to light.

Post efi menu entries:

efibootmgr

$ sudo efibootmgr

BootCurrent: 0003

Timeout: 1 seconds

BootOrder: 0003,0002,0005,0004,0000

Boot0000* Linux Boot Manager VenHw(99e275e7-75a0-4b37-a2e6-c5385e6c00cb)

Boot0001* arch VenHw(99e275e7-75a0-4b37-a2e6-c5385e6c00cb)

Boot0002* GRUB HD(1,GPT,1697b890-0edc-ab4d-858a-8b502c3ff24a,0x1000,0x1f4000)/\EFI\GRUB\GRUBX64.EFI

Boot0003* endeavouros HD(1,GPT,5f5043f5-09ad-4e42-9880-738692ac51a6,0x800,0x82000)/\EFI\ENDEAVOUROS\GRUBX64.EFI

Boot0004* Windows Boot Manager HD(1,GPT,5f5043f5-09ad-4e42-9880-738692ac51a6,0x800,0x82000)/\EFI\MICROSOFT\BOOT\BOOTMGFW.EFI57494e444f5753000100000088000000780000004200430044004f0042004a004500430054003d007b00390064006500610038003600320063002d0035006300640064002d0034006500370030002d0061006300630031002d006600330032006200330034003400640034003700390035007d0000006f000100000010000000040000007fff0400

Boot0005* UEFI OS HD(1,GPT,1697b890-0edc-ab4d-858a-8b502c3ff24a,0x1000,0x1f4000)/\EFI\BOOT\BOOTX64.EFI0000424f

Manually select both entries from the UEFI quick boot menu to see if one of them always works after an update.

They are on different ESP partitions.
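
In the output above, the endeavouros entry shares its partition GUID (5f5043f5-...) with Windows Boot Manager, while GRUB and UEFI OS share another (1697b890-...). To see which ESP each entry actually points at, the GUID in the `HD(...)` part can be matched against the PARTUUID column of `lsblk`. A sketch with illustrative function names:

```shell
#!/bin/sh
# Extract the partition GUID from the HD(...) part of an efibootmgr entry (stdin).
esp_partuuid() {
    sed -n 's/.*HD([0-9]*,GPT,\([0-9a-fA-F-]*\),.*/\1/p'
}

# Print the block device that carries a given PARTUUID, if any.
device_for_partuuid() {
    lsblk -rno PATH,PARTUUID |
        awk -v id="$1" 'tolower($2) == tolower(id) { print $1 }'
}

if command -v efibootmgr >/dev/null 2>&1; then
    efibootmgr | grep -iE 'grub|endeavouros' | while IFS= read -r entry; do
        id=$(printf '%s\n' "$entry" | esp_partuuid)
        if [ -n "$id" ]; then
            # Keep only the entry label (everything before the device path).
            label=${entry%%"HD("*}
            printf '%s-> %s (%s)\n' "$label" "$(device_for_partuuid "$id")" "$id"
        fi
    done
fi
```

Comparing the printed device with the partition that the reinstall script mounts at /boot/efi shows whether the booted entry and the reinstalled image live on the same ESP.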

Linux Boot Manager normally designates a systemd-boot EFI boot entry.

systemd-boot is the default bootloader for EnOS.

Did you go with the default when installing the system?

Did you install Grub (the package) later on and try to fix your boot issue by installing Grub’s bootloader?

Edit:

Perhaps the Linux Boot Manager is from another install.