Greetings lovely community,

Just a quick reminder: if you haven’t done it in a while, feel free to run the following (it may require root privileges):

paccache -r

This command “deletes all cached versions of installed and uninstalled packages, except for the most recent three, by default” (Arch Wiki). You don’t have to do this, though it’s good system maintenance, and if you haven’t run the command in a while you could reclaim a few gigabytes of storage. Personally, I run it once a month and generally reclaim about 1-2 GB. I have a small 256 GB SSD in my laptop, so getting back a few gigs is a real benefit.

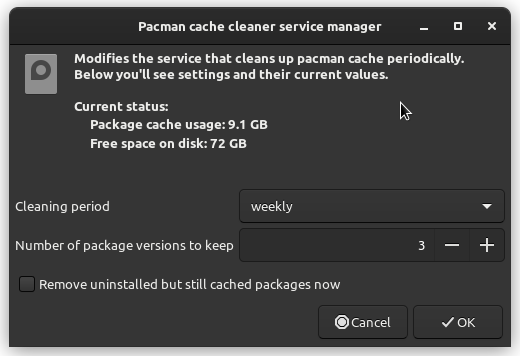
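If you’d like to see what would be freed before deleting anything, here is a minimal sketch. It assumes the cache lives in the stock location /var/cache/pacman/pkg, and the cache_size helper is just a name made up for this example. The -d flag is paccache’s dry run, so nothing is removed:

```shell
#!/bin/sh
# Assumption: the pacman cache is in its default location.
CACHE_DIR=/var/cache/pacman/pkg

# Print the size of a directory in human-readable form,
# or "not found" if the directory does not exist.
cache_size() {
    if [ -d "$1" ]; then
        du -sh "$1" | cut -f1
    else
        echo "not found"
    fi
}

echo "Cache size: $(cache_size "$CACHE_DIR")"

# -d lists what -r would remove without deleting anything.
if command -v paccache >/dev/null 2>&1; then
    paccache -d
else
    echo "paccache not found (it ships in the pacman-contrib package)"
fi

# Variations, left commented out because they do delete files:
#   sudo paccache -rk1    # keep only the most recent version of each package
#   sudo paccache -ruk0   # remove all cached versions of uninstalled packages
```

Running this before and after paccache -r is an easy way to see how much space you actually got back.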

Also from the same wiki page: if you don’t want to run the command manually, you can have it run automatically once a week via a systemd timer, which I just learned about and decided to try for myself. It’s not difficult to enable, but the Arch Wiki doesn’t explain the steps in a beginner-friendly way, so I’ll break it down in case anyone wants to automate the paccache -r command (note: run these commands one at a time):

sudo systemctl enable paccache.timer

sudo systemctl start paccache.timer

systemctl status paccache.timer

The first command enables the timer so it comes back after every reboot, and the second starts it right away. The third command is optional, but it is a good way to check that the timer is enabled and working: the output should show “active (waiting)”.
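For anyone who wants to double-check the timer later, here is a small read-only sketch. The timer_report function is a hypothetical helper written for this post, not part of any package; it only queries systemd and changes nothing:

```shell
#!/bin/sh
# Read-only check of the timer; nothing here changes system state.
TIMER=paccache.timer

timer_report() {
    if ! command -v systemctl >/dev/null 2>&1; then
        echo "systemctl not available on this system"
        return 0
    fi
    # is-enabled and is-active each print a single word, e.g. "enabled" or "active".
    echo "enabled: $(systemctl is-enabled "$1" 2>/dev/null || true)"
    echo "active:  $(systemctl is-active "$1" 2>/dev/null || true)"
    # list-timers shows the next and last scheduled runs for the unit.
    systemctl list-timers "$1" --no-pager 2>/dev/null || true
}

timer_report "$TIMER"

# The enable and start steps can also be combined into one command:
#   sudo systemctl enable --now paccache.timer
```

The list-timers output is handy because it shows when the next cleanup is scheduled, which the plain status output makes a little harder to spot.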

Hope this was helpful. Feel free to share any other system maintenance commands you like to use from time to time that others might benefit from knowing as well.

Edit: A little bit more about paccache can also be found over in the EndeavourOS wiki: