For a while I’d been toying with the idea of building an AI assistant that runs locally on my computer, since I already use LM Studio with a few models.

But I also wanted it to have a “3D” avatar and be able to talk.

So, after some research and with help from an AI (ironic, right?), Gemini in my case, I more or less succeeded this evening.

Anyway, I wanted to keep it as simple as possible, especially since I’m no expert in AI, graphics, or anything else. So I went with the simplest way I could find to reach my goal, more or less.

Of course, it lacks complex animation. My original idea (much harder to achieve in reality) was a personal 3D animated AI assistant that moves around a room, connected to a model running locally in LM Studio.

The complex part is missing, but the “brain” runs locally via LM Studio. I chose a small model to avoid overloading the graphics card and to leave room for other things, like recording video.
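For anyone curious what "runs locally via LM Studio" looks like from the outside, here is a minimal sketch of talking to LM Studio's local server directly, without any frontend. It assumes the server has been started in LM Studio and is listening on its default address (`http://localhost:1234/v1`) with the OpenAI-compatible chat endpoint. The model name `local-model` and the system prompt are placeholders of mine, not something from my actual setup.

```python
import json
import urllib.request

# Assumption: LM Studio's local server is running on its default address.
BASE_URL = "http://localhost:1234/v1"


def build_chat_request(user_text, model="local-model",
                       system_prompt="You are a friendly desktop assistant."):
    """Build an OpenAI-style chat completion payload.

    `model` is a placeholder; use the identifier LM Studio shows
    for the model you have loaded.
    """
    return {
        "model": model,
        "messages": [
            {"role": "system", "content": system_prompt},
            {"role": "user", "content": user_text},
        ],
        "temperature": 0.7,
    }


def ask_local_model(user_text, base_url=BASE_URL, timeout=60):
    """Send one message to the local server and return the reply text."""
    payload = json.dumps(build_chat_request(user_text)).encode("utf-8")
    request = urllib.request.Request(
        f"{base_url}/chat/completions",
        data=payload,
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(request, timeout=timeout) as response:
        body = json.load(response)
    # OpenAI-compatible responses put the text in the first choice's message.
    return body["choices"][0]["message"]["content"]


# Usage (needs the LM Studio server running):
#   print(ask_local_model("Hello! Who are you?"))
```

A frontend can point at this same kind of endpoint, so a quick script like this is a handy way to check that the model side works before blaming the avatar or the voice.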

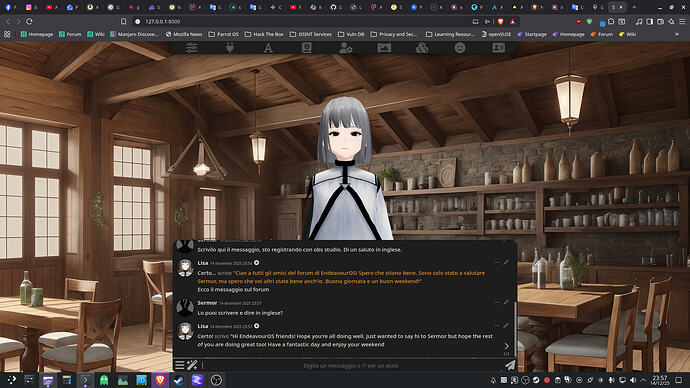

For the “environment,” I used SillyTavern and SillyTavern Extras (for the voice). The avatar comes from VRoid Hub, since there are some ready-made ones there, and so far they’re the only ones that work for me (I tried avatars from other sources, but they didn’t display; I suspect those .vrm files are incomplete somehow).
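I haven’t verified my guess about the incomplete .vrm files, but here is a rough sketch of how one could check. A .vrm file is a binary glTF (GLB) container, and the avatar data lives in a glTF extension: `VRM` for VRM 0.x files, `VRMC_vrm` for VRM 1.0. The function below only reads the header and the JSON chunk and reports which of those extensions is present. Whether a given viewer supports both versions is something I don’t know, so treat the result as a hint, not a diagnosis.

```python
import json
import struct

GLB_MAGIC = 0x46546C67   # ASCII "glTF", little-endian
JSON_CHUNK = 0x4E4F534A  # ASCII "JSON", little-endian


def vrm_extensions(data: bytes):
    """Return the VRM extension names found in the bytes of a .vrm file."""
    if len(data) < 20:
        raise ValueError("too short to be a GLB container")

    # 12-byte header: magic, container version, total length in bytes.
    magic, _version, total_length = struct.unpack_from("<III", data, 0)
    if magic != GLB_MAGIC:
        raise ValueError("not a GLB file (bad magic)")
    if total_length != len(data):
        raise ValueError("declared length does not match size (truncated?)")

    # The first chunk must be the JSON scene description.
    chunk_length, chunk_type = struct.unpack_from("<II", data, 12)
    if chunk_type != JSON_CHUNK:
        raise ValueError("first chunk is not JSON")

    gltf = json.loads(data[20:20 + chunk_length].decode("utf-8"))
    extensions = gltf.get("extensions", {})
    return [name for name in ("VRM", "VRMC_vrm") if name in extensions]
```

With a hypothetical file called `avatar.vrm`, the call would be `vrm_extensions(open("avatar.vrm", "rb").read())`. An empty list means the file is a plain glTF model without VRM data, and a `ValueError` means the container itself looks damaged.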

Well, let’s just say I’m happy with that for now.

Maybe in the next few days I can describe what I did in more detail, if anyone’s interested, and I’ll add it to the opening post.

Anyway, here’s a quick goodbye screenshot (I don’t think you can upload videos from your computer, so you’ll have to settle for the screenshot XD).